How to crawl a Noindex Nofollow site with Screaming Frog

Configure Screaming Frog to crawl a site that is set to not allow bot crawling.

What are the noindex and nofollow site settings?

The “noindex” and “nofollow” site settings are directives that can be implemented in the HTML code of a webpage to instruct search engines on how to handle the page and its links:

- Noindex: This directive tells search engines not to index the content of the page. In other words, the page won’t appear in search engine results pages (SERPs). This is commonly used for pages that are not intended to be publicly visible or for duplicate content that shouldn’t be indexed.

- Nofollow: This directive tells search engines not to follow the links on the page. It means that search engine crawlers won’t pass authority or PageRank to the linked pages, and those pages won’t benefit from being linked to from the “nofollow” page.

Combining both directives, “noindex, nofollow” is often used for pages that the website owner doesn’t want to appear in search results and doesn’t want to pass authority to other pages through links on that page.

These directives are typically implemented using meta tags in the HTML code of a webpage. For example: `<meta name="robots" content="noindex, nofollow">`

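They can also be sent with HTTP response headers, using the X-Robots-Tag header. The robots.txt file is a separate mechanism: it blocks crawling of URLs with Disallow rules rather than setting noindex or nofollow. Together, these controls are useful for managing how search engines interact with specific pages or sections of a website.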
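To check which directives a page actually carries before you crawl it, a small script can help. The following is a minimal sketch using only the Python standard library; the class and function names (`RobotsMetaParser`, `robots_directives`) are made up for this example, and it assumes you already have the page HTML and response headers to hand. It ignores bot-specific forms such as `googlebot: noindex`.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for part in attr_map.get("content", "").split(","):
                if part.strip():
                    self.directives.add(part.strip().lower())


def robots_directives(html, headers=None):
    """Return the robots directives found in the HTML and the headers."""
    parser = RobotsMetaParser()
    parser.feed(html)
    found = set(parser.directives)
    # X-Robots-Tag carries the same directives as an HTTP response header.
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            found.update(p.strip().lower() for p in value.split(",") if p.strip())
    return found


sample_html = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(sorted(robots_directives(sample_html)))  # ['nofollow', 'noindex']
print(sorted(robots_directives("<html></html>", {"X-Robots-Tag": "noindex"})))  # ['noindex']
```

If the result contains `noindex` or `nofollow`, the Screaming Frog settings described in this article are the ones to look at.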

How to bypass the nofollow when crawling a site with Screaming Frog

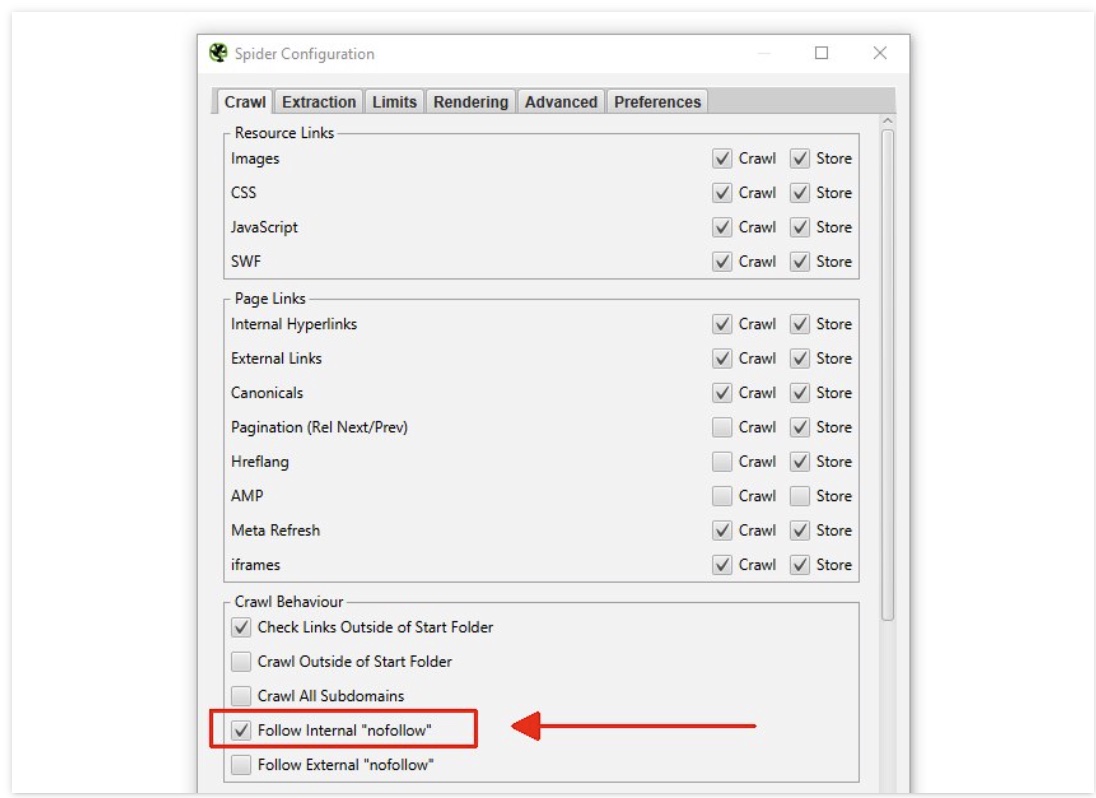

- Go to ‘Config > Spider’

- Scroll down to ‘Crawl Behaviour’

- Enable ‘Follow Internal Nofollow’
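To picture what this setting changes, here is a toy model of a crawler's link filter in Python. It is only an illustration, not how Screaming Frog is implemented: `LinkCollector`, `links_to_crawl` and the `follow_nofollow` flag are hypothetical names, and the sketch only looks at `rel="nofollow"` on individual links.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values, optionally skipping rel="nofollow" links."""

    def __init__(self, follow_nofollow=False):
        super().__init__()
        self.follow_nofollow = follow_nofollow
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        href = attr_map.get("href")
        if not href:
            return
        rel_tokens = attr_map.get("rel", "").lower().split()
        # Skip nofollow links unless the (hypothetical) toggle is on.
        if "nofollow" in rel_tokens and not self.follow_nofollow:
            return
        self.links.append(href)


def links_to_crawl(html, follow_nofollow=False):
    """Return the links a crawler would queue from this HTML."""
    collector = LinkCollector(follow_nofollow)
    collector.feed(html)
    return collector.links


page = '<a href="/about">About</a><a rel="nofollow" href="/private">Private</a>'
print(links_to_crawl(page))                        # ['/about']
print(links_to_crawl(page, follow_nofollow=True))  # ['/about', '/private']
```

With the toggle off, the nofollow link is never queued, which is why a nofollow site can appear to have only a handful of pages until the setting is enabled.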

How to bypass the noindex

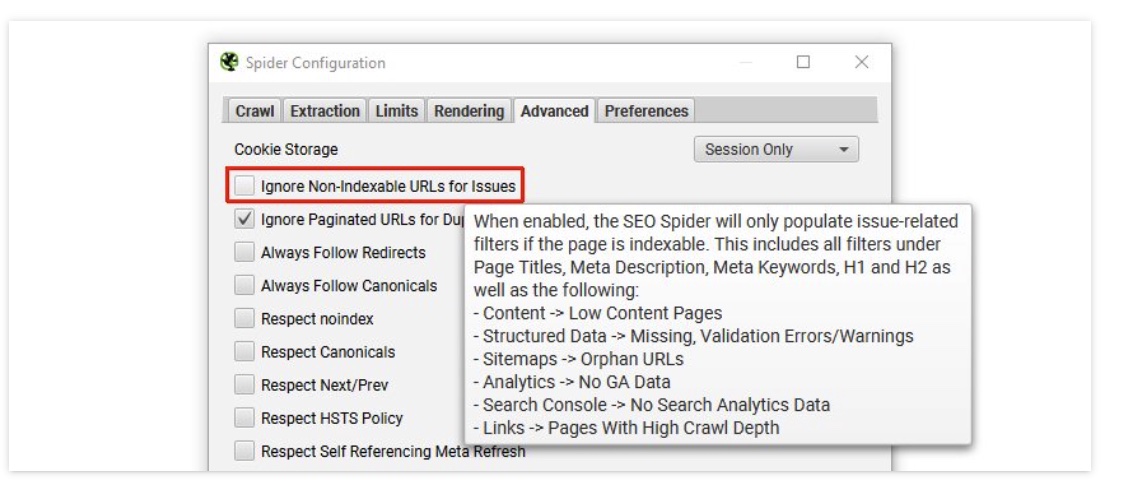

- Go to ‘Config > Spider > Advanced’

- Uncheck ‘Ignore Non-Indexable URLs for Issues’

Now you can crawl a site set to noindex, nofollow.

How to bypass robots.txt

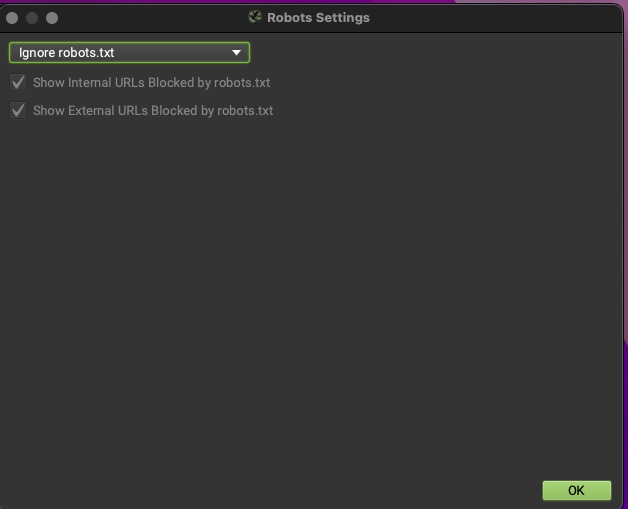

Use this when crawling has been blocked in the site’s robots.txt file.

- Go to ‘Config > Robots.txt > Settings’

- Choose ‘Ignore robots.txt’.
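If you are not sure whether robots.txt is what is stopping the crawl, you can test the rules yourself. The sketch below uses Python's standard `urllib.robotparser` module; the `is_allowed` helper, the sample rules and the `example.com` URL are assumptions for illustration, and the user-agent string should be whatever your crawler is configured to send.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A robots.txt that blocks every bot from the whole site.
blocked_site = """User-agent: *
Disallow: /
"""

print(is_allowed(blocked_site, "Screaming Frog SEO Spider", "https://example.com/page"))  # False
```

A result of `False` means a crawler that obeys robots.txt will not fetch the URL, so the ‘Ignore robots.txt’ option is needed to crawl it.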